Rapid Testing: Dev/Test handovers reinvented

TL;DR: Download the Playbook and get playing: https://syrett.blog/download/rapid-testing-playbook/

Towards the end of last year, I began working with a new team. Unlike most teams I’ve worked with in recent years, the divide between the Developers and Testers was wide and was causing a lot of tension. I wanted to find a way to bring them together and get them working more collaboratively. This post is the story of that journey so far.

In the beginning

When I first joined the team I identified a number of issues and inefficiencies, namely:

- Lots of testing work being carried over at the end of each sprint

- Bugs being found weeks after the development work was complete

- Lots of context switching

- Developers and testers not talking about what should be tested

- Developers and testers repeating similar tests

If these challenges sound familiar, read on.

Baby steps

To address these issues I wanted the developers and testers to start working more collaboratively.

We started by running a workshop to get all the team members to agree on a common Definition of Done (DoD) for each story.

We then removed the “Dev” and “Test” columns from the scrum board and introduced an “In Progress” column with a Work in Progress (WiP) limit. I explained to the team that until the DoD had been met, the story was just “in progress”, and that team members should work together to get stories completed rather than picking up new ones.

After a few weeks, I could see that the changes had brought some small improvements, but the WiP limit was regularly being exceeded and Developers and Testers were still working largely in isolation. I wanted Developers and Testers to collaborate more closely and discuss testing earlier, rather than treating it as an afterthought.

Dev/Test handover

I had spoken with the team about the importance of effective “Dev/Test handovers”, where a Tester would work with the Developer to review the changes and perform some exploratory tests together on the Developer’s local environment, helping to find bugs sooner.

I could see the Testers were trying to engage with the Developers, but their lack of knowledge of the business requirements and the technical implementation was getting in the way. I wanted the team to run better refinement sessions, but they weren’t quite ready for that. Besides, we still had to complete all the work the team had already committed to, and we were up against a fixed deadline.

They were trying their best, but they clearly lacked experience of this way of working. They needed some coaching.

Introducing the Rapid Testing Playbook

You may be familiar with the concept of a “playbook”, used by American football coaches to drill their players on how to employ certain in-game strategies. I thought the same approach could be used to coach Testers on how to run effective Dev/Test handover sessions. I also wanted a catchy name that would capture people’s imagination and get everyone focusing on faster feedback. Fortunately, a former colleague, John O’Leary, had previously told me about his success in introducing his own brand of “Rapid Testing” when he first took on his new role.

The Rapid Testing Playbook was born!

1. Pair up

As soon as the code change has been made, ask for a teammate to join you for a Rapid Testing session. Team members should make themselves available to pair up straight away, so no one has to wait around. Sit together at a desk or start a video call if working remotely.

2. Timebox

Agree a time limit for the Rapid Testing session and set a timer.

3. Take note

During the Rapid Testing session, be sure to make a note of anything you discover:

- Bugs found (consider both functional and non-functional issues)

- Risks identified or assumptions being made

- Questions for the business

- Tests to run

4. Review the request

Read through the Product Backlog Item (PBI) and establish what prompted the change. Clarify any uncertainty with the Business Analyst (BA).

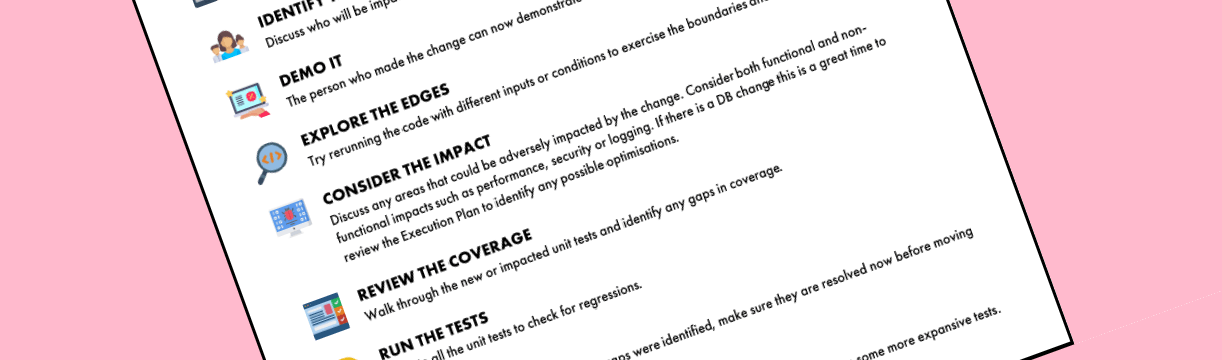

5. Identify the users

Discuss who will be impacted by the change.

6. Demo it

The person who made the change can now demonstrate how the change satisfies the PBI.

7. Explore the edges

Try rerunning the code with different inputs or conditions to exercise the boundaries and corner cases.
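As a rough illustration of what exploring the edges can look like, here is a minimal Python sketch. The free-delivery rule, the `qualifies_for_free_delivery` function and its threshold are all hypothetical, invented for this example rather than taken from the team’s code. The point is the choice of probe values: just below, exactly on and just above each boundary.

```python
from decimal import Decimal

# Hypothetical business rule, used purely for illustration:
# orders of 100.00 or more qualify for free delivery.
FREE_DELIVERY_THRESHOLD = Decimal("100.00")


def qualifies_for_free_delivery(order_total: Decimal) -> bool:
    """Return True if the order total meets the (hypothetical) threshold."""
    if order_total < 0:
        raise ValueError("order total cannot be negative")
    return order_total >= FREE_DELIVERY_THRESHOLD


def explore_edges() -> list[tuple[str, str]]:
    """Run the rule against values on and around its boundaries.

    Returns (input, outcome) pairs so the pair can note anything surprising.
    """
    probes = [
        "-0.01",   # just below the lowest valid value
        "0",       # empty basket
        "99.99",   # just below the threshold
        "100.00",  # exactly on the threshold
        "100.01",  # just above the threshold
    ]
    results = []
    for raw in probes:
        try:
            outcome = str(qualifies_for_free_delivery(Decimal(raw)))
        except ValueError as exc:
            # Record the rejection rather than stopping the session
            outcome = f"error: {exc}"
        results.append((raw, outcome))
    return results


if __name__ == "__main__":
    for value, outcome in explore_edges():
        print(f"{value:>7} -> {outcome}")
```

Running it prints one line per probe. During a session, the pair would compare each outcome with what the PBI expects and note any surprises as bugs, risks or questions for the business.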

8. Consider the impact

Discuss any areas that could be adversely impacted by the change. Consider both functional and non-functional impacts, such as performance, security or logging. If there is a database change, this is a great time to review the query’s execution plan to identify any possible optimisations.

9. Review the coverage

Walk through the new or impacted unit tests and identify any gaps in coverage.

10. Run the tests

Execute all the unit tests to check for regressions.

11. Fix it up

If any bugs, test failures or coverage gaps were identified, try to resolve them now, before moving on to something else.

12. Deploy it

Once the issues are resolved, deploy to a test environment if necessary and run some broader tests.

If you found this useful and want to try it for yourself, you can grab your own copy here: Rapid Testing Playbook.pdf